Image by Julius Drost.

In recent years, these models improved immensely and finally perform at such a level where we can make use of them. At least in most tasks, in others, there is some visible room left for improvement. So, whether you dislike providing alternative text for website images, would like a better searchable image database, want to develop a chatbot with multimodal abilities or are visually impaired, here’s how vision-language models can make your life easier.

What can Vision-Language models do for you:

In general, this form of AI can do most things that are related to image and text, be it as an input or output. If you are thinking of image classification, aka telling you what objects are in the picture by the form of a label, I dare you to think bigger. The classic Computer Vision task of image classification might include textual labels, but since they can be discretised, this is hardly a multi-modal problem.

Image Captioning

Think about more complex tasks such as getting a good description of the image: a coherent sentence instead of a single label. It remains true that “an image says more than a thousand words” but a sentence is a good starting point. Automatically generated image captions can be used for a multitude of things: augmenting image searchability with text input, and providing good alternative texts for images on websites. Writing alt-text is most people’s least favourite part about writing a blog, however, it does improve SEO scores and, even more impactful, it helps the visually impaired to enjoy a blog with its full potential. Granted, captions are limited to photos and illustrations and do not yet include elaborate flow charts and graphs, but Rome wasn’t built in a day.

Figure 1: Caption: two dogs play in the snow in winter clothes (Image by Jan Walter Luigi)

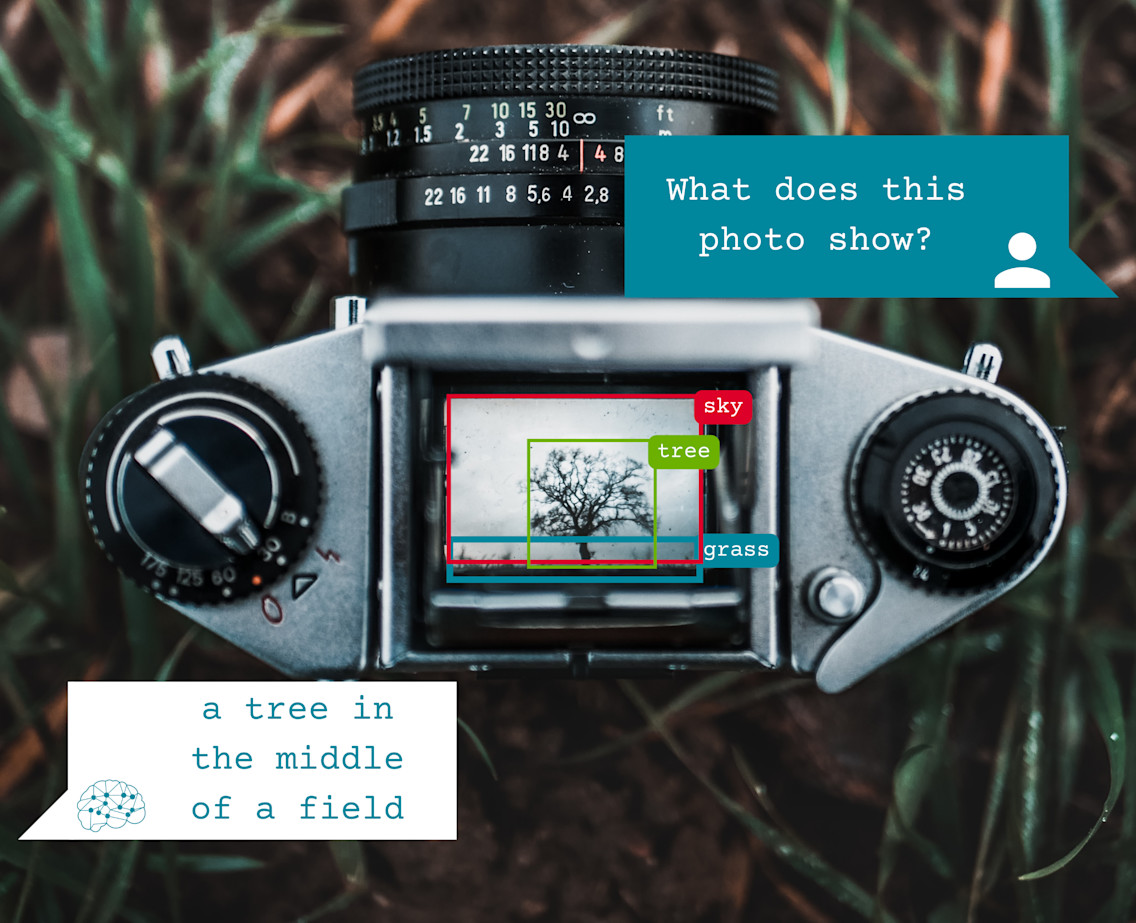

Visual Question Answering

“What colour is this shirt?” or “What number is on this coin?” are valid questions a person with poor vision might ask someone in search of help and there are even apps for that. But why rely on people, when you can rely on technology? Then vision question answering can help answer any questions about objects in an image, their size, shape, colour, quantity or relation to each other.

Figure 2: Question: “What plant is this?” Answer (BLIP): Cherry Blossom. Caption: a blue sky with pink flowers on the branches (Image by Melanie Weidmann)

Visual Dialog

Very related to visual question answering is visual dialogue. The only difference is that there is some dialogue that comes before the posed question. For example, if you were asking a chatbot to explain an image to you, the question “and what is there below it?” does not make sense by itself. With visual dialogue, the model receives this information and can therefore give an informed answer as it can deduce what “it” refers to based on the previous dialogue. This is especially helpful for chatbots and smart communication but also a challenging problem to solve.

Figure 3: Question: “What is in the big jar?” Answer (BLIP): “cookies” Question 2: “What is next to it?” Answer: “stack of books” Caption: a couple of shelves that have some food on top of them (Image by Callum Hill)

There are also Vision-Language problems that are not as relevant when it comes to helping people. Instead, they help us understand compositional semantics and give a deeper insight into how algorithms and Ais work and understand our world.

Text-To-Image

Text-to-Image generation is one of those tasks, although it can also be seen as a nice “show-off” task. It is the task of giving the AI model a few words to visualise an image like a photoshop artist. This image is supposed to look as realistic as possible, however, current state-of-the-art often gives you something that resembles something your untalented friend drew, not entirely wrong but now exactly what you expected. Some images even look as if said friend never saw the object but you are doing your best to explain it to them. Just look for yourself:

Input: “a black dog with curly fur”

Output of Latent Diffusion by CompVis:

Figure 4: Caotion: a collage of photos of the same black dog

You can try it yourself on hugging face.

Visual Reasoning

Another task that lets us gain insights into compositional semantics in visual reasoning. This task is less showy when compared to text-to-image but just as impressive. The objective of this task is to compare two images to each other and give a natural language sentence describing their similarities and differences.

Figure 5: Input: "Both images show the same amount of dogs, all of them are running." Output: True.

Caption: Left: a couple of dogs running down a dirt road. (Image by Alvan Nee) Right: two dogs play in the snow in winter clothes (Image by Jan Walter Luigi)

The AI to help you:

Many AI models can solve one or many of these vision-language tasks, but recently two models have prided themselves in solving most of these tasks with only one model and outperforming most of the previous state-of-the-art models.

BLIP

A well-known model that was released in February of 2022 by Salesforce is called BLIP, which is short for Bootstrapping Language-Image Pre-training. This model consists of four modules each tasked to learn a different job but working together to solve many complex visual-language tasks. Making use of language-image pre-training, this model is trained on a variety of datasets of images and captions. However, it can also be fine-tuned for specific tasks such as visual question answering, visual reasoning and visual dialogue. Compared to previous models, BLIP outperforms most of these on the aforementioned tasks.

With BLIP a new method called CapFilt is introduced. This approach improves noisy image-text caption datasets, a common problem for web-crawled images and their alternative text. This approach can be used for fine-tuning a pre-trained model for image captioning. Making use of a stochastic sampling method (called Nucleus Sampling) the model can produce multiple different captions for one image. This behaviour can also be turned off when switching over to Beam Search instead of sampling. You can try BLIPs image captioning yourself on hugging face. If you want to know more about the inner workings of BLIP, you can read the paper or look at the Github repository.

Another article about how you can use BLIP for image captioning as someone with or without an IT background, will be following shortly.

(All image captions and alt-text of this article were generated by BLIP.)